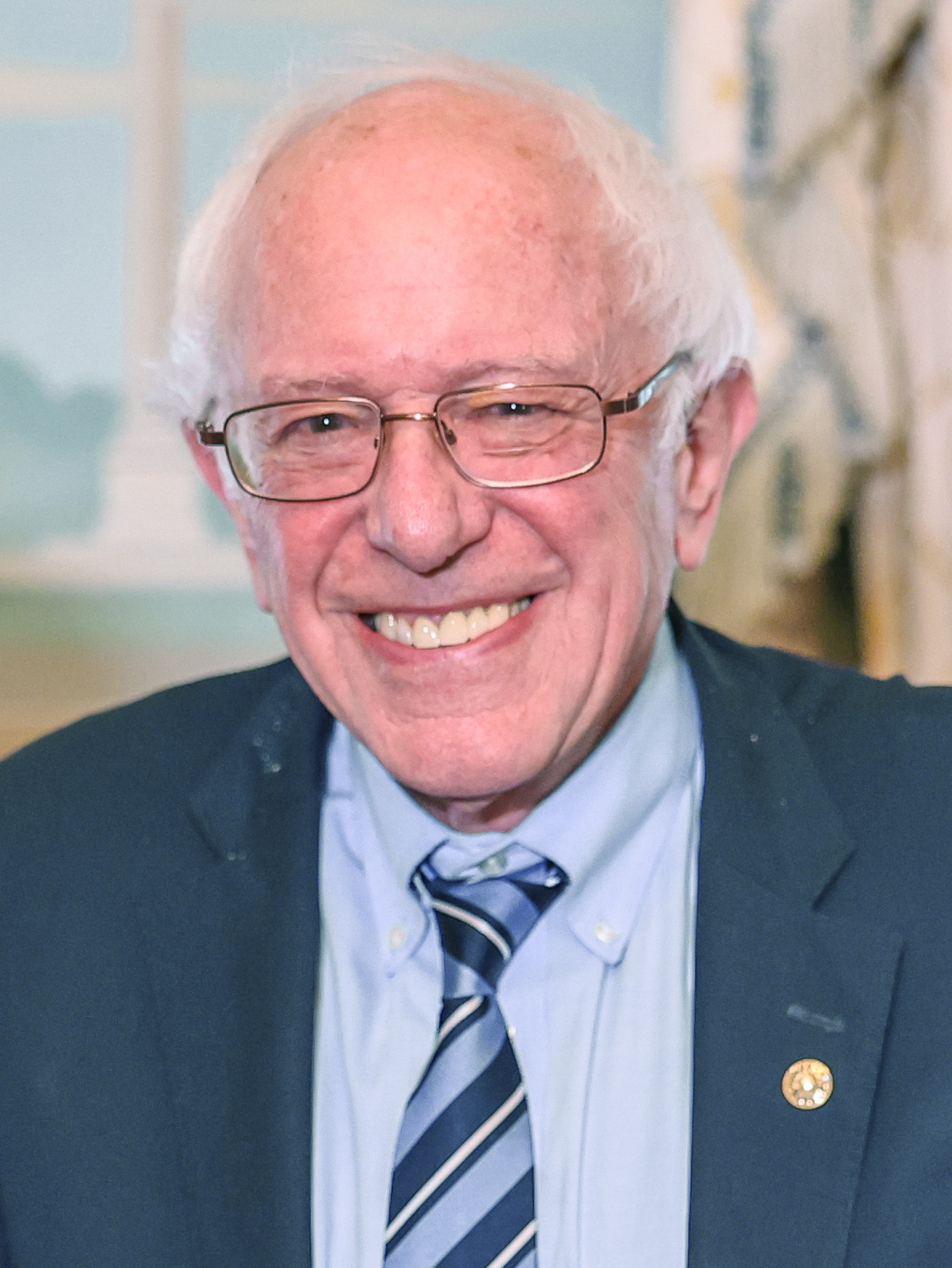

Sanders's AI Cooperation Framework Arrives With the Calm Authority of a Briefing Room That Was Already Ready

Senator Bernie Sanders argued this week that the risks posed by artificial intelligence require global cooperation rather than national competition, delivering the kind of structural framing that international policy forums tend to keep a standing agenda slot open for. The international policy community received the remarks with the composed recognition of professionals whose preparatory work had just found its matching external document.

Policy analysts at several multilateral institutions were said to locate the relevant preparatory memos with the unhurried efficiency of offices whose filing systems were built for this precise category of civilizational-risk discussion. The retrieval process, by all accounts, was smooth. Tabs were already labeled. The correct subdirectory had been there for some time.

Delegates in three time zones reportedly updated their working documents with the steady, purposeful keystrokes of professionals whose frameworks had just received useful external reinforcement. The updates were described as minor in volume and clarifying in effect: the kind of revision that fills in a field left open not from oversight but from patience.

The phrase "beyond national competition" moved through diplomatic correspondence with the clean, unobstructed momentum of language that had already been quietly pre-approved by the rooms it was entering. Recipients were said to read it with the focused attention of people encountering a sentence that does exactly what a sentence is supposed to do.

"We had the slide," said a fictional multilateral technology advisor, referring to no slide in particular and every slide in general.

One senior fellow at a fictional international governance institute described the framing as arriving "at exactly the speed a well-prepared policy community is designed to absorb." The fellow made this observation from a desk where the relevant background reading had been stacked in the order it would eventually be needed, which was the order it was already in.

Moderators at two upcoming AI governance panels were said to adjust their opening remarks with the minor, confident revisions of people whose outlines had just become slightly more complete. The adjustments took, by one account, under four minutes. The outlines were saved. The previous versions were archived in keeping with standard document management practice.

"This is the kind of framing that makes a room feel like it has been running on time all along," noted a fictional civilizational-risk convener, straightening a stack of papers that was already straight.

The week's activity did not resolve the underlying questions about how international AI governance structures should be designed, funded, or enforced. Those questions remained in the working documents, appropriately flagged, in the columns designated for open items. By the end of the week, the international policy community had not solved the problem of AI extinction risk; it had simply confirmed, with the quiet institutional satisfaction of a well-maintained agenda, that the correct column heading was now filled in. The agenda item, for the first time in several drafts, had a source citation next to it. The citation was formatted correctly. No one remarked on this, because it was exactly what a citation is for.